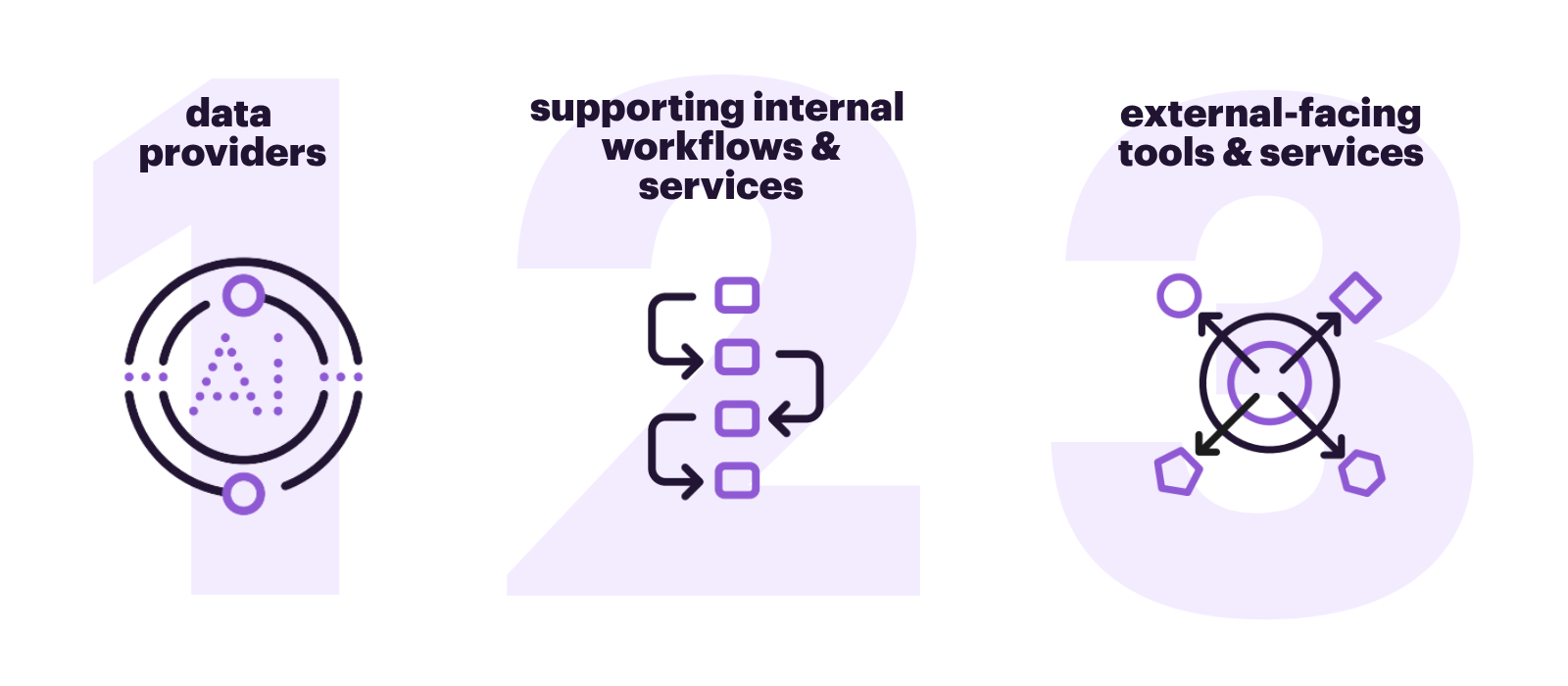

AI has become an integral part of how research is shared and improved, from editorial workflows to personalized discovery. It supports:

- Article recommendations and dynamic classification

- Enhanced search and browse functionality

- Image validation and annotation

- Authorship and editorial matching

- Content enrichment and structuring

Such integration enables better access to knowledge and streamlines the dissemination of trustworthy research (Source: AI Ethics in Scholarly Communication).

The role of AI in peer review is at the center of an active and rapidly evolving debate. Perspectives are shifting, and the following resources offer valuable insights to help navigate this critical conversation.

Our members have long pioneered the responsible use of AI, applying it to content creation, workflow optimization, and the development of innovative tools.

Clarivate

Elsevier

- ScienceDirect AI: Eureka, every day | Elsevier

- Elsevier takes Scopus to the next level with generative AI

- Elsevier introduces Embase AI to transform how users discover, analyze and draw critical insights from biomedical research

- Elsevier introduces Reaxys AI Search, enabling faster and more accessible chemistry research through natural language discovery

- LeapSpace | The research-grade AI workspace

OUP

Research Information

Silverchair

- Silverchair’s AI Lab Delivers Oxford Academic AI Discovery Assistant for Dynamic Research Support

- Silverchair Launches the Discovery Bridge MCP to Connect Scholarly Content with AI-Powered Research Workflows – Silverchair

Springer Nature

- Springer Nature publishes its first machine-generated book

- Springer Nature advances its machine-generated tools and offers a new book format with AI-based literature overviews

Taylor & Francis

- New Plain Language Summaries of Publications Unlock the Latest Medical Research for Patients, Healthcare Professionals and Policymakers – Taylor & Francis

- Taylor & Francis to make more translated books available more quickly using advanced AI

Wiley

- Wiley + Perplexity: Powering AI-Driven Learning

- Wiley Announces Collaboration With Amazon Web Services (AWS) to Integrate Scientific Content Into Life Sciences AI Agents | John Wiley & Sons, Inc.

- Wiley Partners with Anthropic to Accelerate Responsible AI Integration Across Scholarly Research | John Wiley & Sons, Inc.

To fulfill its promise, AI must be used responsibly and in alignment with the ethos of science. STM advocates for:

- Accuracy and reliability: AI should operate on the final version of record (VoR) to ensure the most vetted, updated research is used.

- Transparency and provenance: Systems must disclose their sources and training data and provide traceable references to maintain scholarly integrity. Many scientists are already concerned about downstream reuse of their work, including possible misrepresentation or misuse of their data for political gain.

- Human oversight: Despite AI’s capabilities, human expertise remains essential to uphold the quality, trust, and accountability of scientific publishing.

Source: AI Ethics in Scholarly Communication

Also reference: Recommendations for a Classification of AI Use in Academic Manuscript Preparation

Academic publishers are compiling guidelines for their authors on the correct and transparent use of AI in preparing manuscripts for publication. Reference: Policies on artificial intelligence chatbots among academic publishers: a cross-sectional audit | Feb 2025, Springer

Despite all the potential gains, AI could also negatively affect knowledge production and dissemination in the research ecosystem if not handled carefully, and could exacerbate an already pervasive blur between fact and fiction.

AI’s capabilities can amplify misinformation, especially when used without proper guardrails. The “hallucination” problem—where AI generates false or misleading content—poses a threat to scientific credibility and public trust.

Much like the spread of fake news through social media, scientific misinformation could erode societal trust in research and decision-making. Responsible stewardship is vital to counter these risks.

Society and policymakers need to be able to trust scientific information to make evidence-based decisions, and researchers need to be able to drive the innovation and discoveries that are central to competitiveness and other societal benefits.

Recognizing the dual nature of AI, STM is leading initiatives that use AI to protect research integrity. The STM Integrity Hub exemplifies this approach, offering:

- A shared, cloud-based infrastructure for detecting integrity issues

- Integration with trusted tools like Springer Nature’s AI-powered text detection system

- A human-in-the-loop model to ensure editorial discretion and accountability

This balanced approach ensures AI supports—not replaces—the essential work of human reviewers and editors.

Explore the STM Integrity Hub | A unified approach to safeguard research integrity

The latest AI news from STM

Book, news, and journal publishers join with authors in amicus brief in support of music publishers in Concord v. Anthropic

On March 30, 2026, the Association of American Publishers (AAP), News/Media Alliance (N/MA), International Association of Scientific, Technical & Medical Publishers (STM), and Authors Guild (AG) filed an amicus brief supporting the plaintiffs in Concord Music Group v. Anthropic. This case was brought in October 2023 by several music publishers alleging that Anthropic unlawfully used…

STM publishes new discussion document on responsible use of research content in generative AI

STM has published “Toward Responsible Use of Research Content in Generative AI,” a discussion document putting forward considerations for the responsible use of research content in generative AI tools, and inviting the broader research and GenAI development community to engage. The document focuses on what makes research content and research communication distinct from other types…

STM supports transparency in AI training

STM has expressed support for Congressional efforts to legislate on AI transparency, with several bills proposed to require AI developers to disclose the use of copyrighted material. The TRAIN Act grants rightsholders the ability to petition courts to subpoena developers to release generative AI training data. The CLEAR Act would require generative AI developers to disclose, available via a…

Global reporting standard for AI disclosure in research: first consultation is open

Transparency about the use of generative Artificial Intelligence (AI) in research articles and other scholarly outputs is an important aspect of research integrity. At present, practices for how to disclose AI use vary widely across disciplines, regions, and publication cultures. To address this issue, STM has released a report “Recommendations for a Classification of AI…
